Unlocking Data for Everyone

Natural language AI is breaking the analytics bottleneck — giving business teams direct access to insights without waiting on analysts.

The data bottleneck that has plagued organizations for decades is finally breaking. While business teams have grown increasingly data-hungry, they've remained dependent on analysts to translate questions into queries, dashboards into decisions. But 2024 marked a turning point: natural language AI matured enough to handle real business complexity, and the economics of conversational analytics shifted from "nice to have" to "competitive necessity."

This challenge hits small and medium enterprises (SMEs) particularly hard. Demand for data analytics professionals will likely outstrip available talent for some time, and many SMEs understand the benefits of data science but lack the expertise or resources to implement it. The result? Only 10% of SMEs have adopted advanced analytics, even though SMEs make up 99% of businesses and employ 61% of the workforce.

Meanwhile, organizations implementing AI-driven analytics report 20% to 30% gains in productivity, speed to market, and revenue, with some achieving a 50% reduction in project lifecycles.

The transformation isn't just about faster answers — it's about democratizing data access for organizations that can't afford dedicated analyst teams.

When Language Becomes the New Interface

The shift from SQL to natural language represents more than technological progress — it's about democratizing insight generation across every business function. As Tristan Handy of dbt Labs notes, this evolution "isn't about replacing analysts — it's about enabling self-service" while maintaining expert oversight.

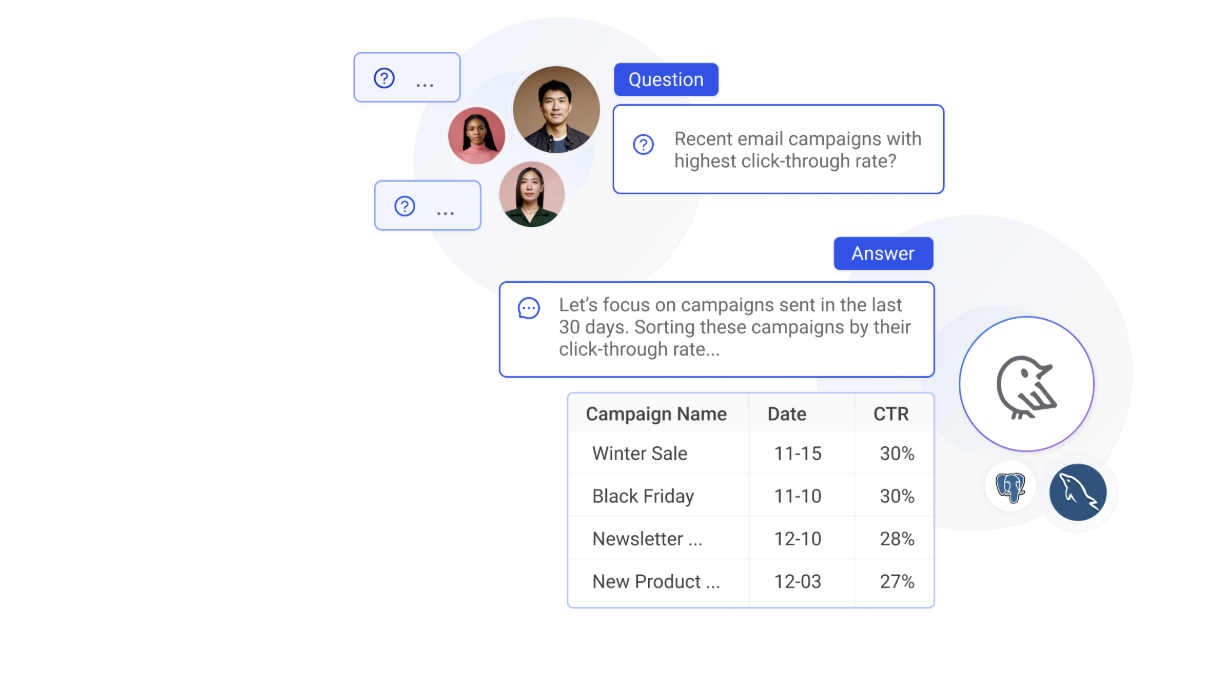

Today's solutions like Wren AI demonstrate this potential, translating English queries into governed SQL, charts, and contextual summaries within seconds. Their GenBI engine enforces data governance and semantic consistency behind the scenes, ensuring business users get accurate, trustworthy insights without technical barriers.

Similarly, platforms like Sequel enable natural-language queries across PostgreSQL, MySQL, ClickHouse, and other enterprise databases, maintaining compatibility while dramatically reducing time-to-insight.

The business impact can show up quickly:

- Decision cycles compressed from days to minutes

- Business teams operate with greater autonomy

- Data literacy spreads organically across functions

- Analytics teams focus on strategic rather than routine work

But this transformation demands more than deploying new tools — it requires rethinking how organizations structure, govern, and validate data-driven insights.

The Hidden Complexity Behind Simple Questions

Natural language AI exposes the ambiguity that has always existed in business requests, making previously hidden challenges visible:

Semantic precision matters more than ever. When a sales director asks for "active users," the AI must distinguish between weekly logins, feature engagement, or concurrent sessions — definitions that vary across teams and contexts.

Trust requires transparency. Business users need confidence that AI-generated insights align with the same definitions and logic that analysts use, complete with audit trails and data lineage.

Governance scales with access. As more users gain direct data access, permission controls, role-based restrictions, and compliance requirements grow more complex, because every new user and dataset adds combinations that must be managed.

Context drives accuracy. Behind every successful query lies sophisticated metadata, semantic frameworks, and domain-specific knowledge that the AI must access and apply correctly.

The organizations succeeding with conversational analytics aren't just implementing new interfaces — they're building systematic approaches to these foundational challenges.
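The "active users" ambiguity described above can be made concrete with a small sketch. It assumes a hypothetical in-memory registry (`DEFINITIONS`) and a hypothetical `resolve_term` helper; none of these names come from a specific product. The idea is simply that when a phrase maps to several definitions, the interface should either pick the asking team's own definition or return all candidates and ask, rather than guess.

```python
from dataclasses import dataclass
from typing import List, Optional, Union


@dataclass(frozen=True)
class MetricDefinition:
    name: str          # canonical metric name
    description: str   # plain-language meaning shown to the user
    owner_team: str    # team whose definition this is


# Hypothetical registry: one business phrase, possibly several definitions.
DEFINITIONS = {
    "active users": [
        MetricDefinition("weekly_login_users",
                         "Users with at least one login in the last 7 days", "sales"),
        MetricDefinition("feature_engaged_users",
                         "Users who used a core feature in the last 7 days", "product"),
        MetricDefinition("concurrent_sessions",
                         "Sessions open at the same moment", "infrastructure"),
    ],
    "q2 revenue": [
        MetricDefinition("q2_recognized_revenue",
                         "Recognized revenue in fiscal Q2", "finance"),
    ],
}


def resolve_term(
    term: str, team: Optional[str] = None
) -> Union[MetricDefinition, List[MetricDefinition]]:
    """Return a single definition when the term is unambiguous for this team.

    Otherwise return every candidate so the interface can ask the user
    which meaning they intend. Unknown terms yield an empty list.
    """
    candidates = DEFINITIONS.get(term.strip().lower(), [])
    if len(candidates) == 1:
        return candidates[0]
    # Prefer the asking team's own definition when exactly one exists.
    team_matches = [d for d in candidates if d.owner_team == team]
    if len(team_matches) == 1:
        return team_matches[0]
    return candidates
```

In this sketch, a sales director asking for "active users" would get the weekly-login definition, while an anonymous request would receive all three candidates and a clarifying prompt could be shown.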

The Infrastructure Behind Intelligent Conversations

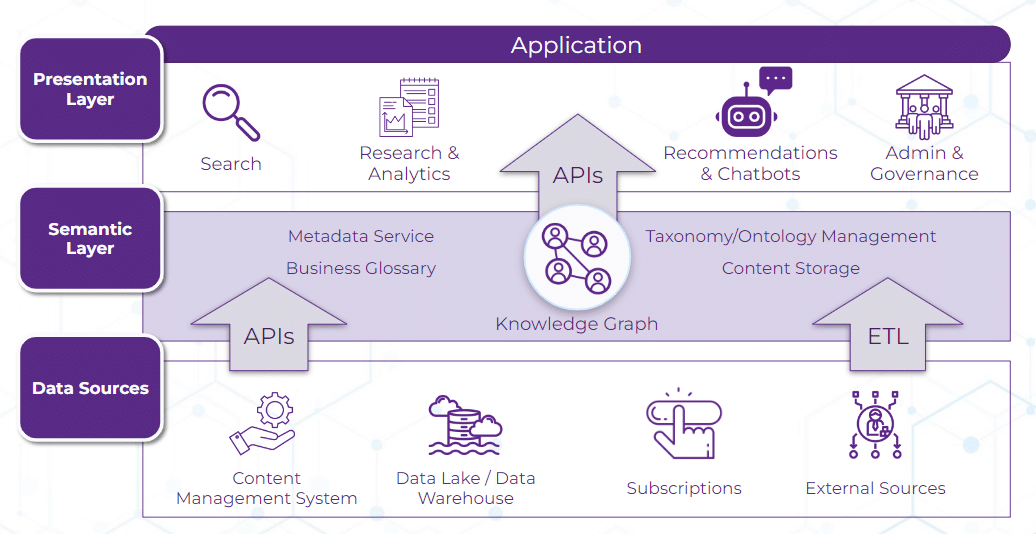

Leading organizations are investing in four critical capabilities that anchor natural language into reliable business logic:

- Semantic Layers serve as the translation hub, converting business terminology ("Q2 revenue," "churn rate") into precise, governed database queries that maintain consistency across users and contexts.

- Metadata-Aware AI Engines like dbt's Fusion or Snowflake's semantic views embed enterprise definitions directly into the AI's query logic, ensuring outputs reflect organizational standards rather than generic interpretations.

- Human-in-the-Loop Validation preserves analyst oversight for complex queries, preventing AI "hallucinations" while building confidence in automated insights for routine requests.

- Explainability and Audit Systems map every user query to complete data lineage (metrics, joins, filters, and transformations), ensuring full transparency and regulatory compliance.

These aren't just technical requirements — they're trust-building mechanisms that determine whether conversational analytics becomes a strategic advantage or a source of confusion.
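As a rough illustration of how a semantic layer and a lineage record fit together, the sketch below compiles a business term into SQL and returns the lineage alongside it. Everything here is an assumption made for illustration: the `Metric` structure, the `SEMANTIC_LAYER` dictionary, the `compile_metric` function, and the table and column names are hypothetical and do not reflect the API of dbt, Snowflake, Wren AI, or any other tool.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Metric:
    name: str                          # canonical metric name
    expression: str                    # governed SQL aggregate
    table: str                         # source table
    filters: Tuple[str, ...] = ()      # filters that always apply
    dimensions: Tuple[str, ...] = ()   # columns users may group by


# Hypothetical governed definitions; table and column names are invented.
SEMANTIC_LAYER: Dict[str, Metric] = {
    "q2 revenue": Metric(
        name="q2_revenue",
        expression="SUM(amount)",
        table="finance.orders",
        filters=("status = 'completed'", "fiscal_quarter = 'Q2'"),
        dimensions=("region", "product_line"),
    ),
    "churn rate": Metric(
        name="churn_rate",
        expression="AVG(CASE WHEN churned THEN 1.0 ELSE 0.0 END)",
        table="crm.subscriptions",
        dimensions=("plan",),
    ),
}


def compile_metric(term: str, group_by: str = "") -> Dict[str, object]:
    """Translate a business term into SQL plus a lineage record."""
    key = term.strip().lower()
    if key not in SEMANTIC_LAYER:
        raise KeyError(f"No governed definition for {term!r}")
    metric = SEMANTIC_LAYER[key]

    # Only approved dimensions may be interpolated into the query text.
    if group_by and group_by not in metric.dimensions:
        raise ValueError(
            f"{group_by!r} is not an approved dimension for {metric.name}"
        )

    select_prefix = f"{group_by}, " if group_by else ""
    sql = (
        f"SELECT {select_prefix}{metric.expression} AS {metric.name} "
        f"FROM {metric.table}"
    )
    if metric.filters:
        sql += " WHERE " + " AND ".join(metric.filters)
    if group_by:
        sql += f" GROUP BY {group_by}"

    # The lineage record travels with the SQL so the answer can be audited.
    lineage = {
        "metric": metric.name,
        "source": metric.table,
        "filters": list(metric.filters),
        "group_by": group_by or None,
    }
    return {"sql": sql, "lineage": lineage}
```

Calling `compile_metric("Q2 revenue", group_by="region")` returns both the query and the record of which table and filters produced it. Because the filters live in the definition rather than in the user's question, two people asking for "Q2 revenue" get the same logic.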

Reshaping Roles, Not Replacing Them

This transformation redefines organizational capabilities rather than eliminating positions:

- Data engineers architect the pipelines, metadata frameworks, and access controls that enable reliable self-service analytics.

- Analytics engineers evolve into semantic architects, curating business definitions, maintaining conversational prompts, and ensuring AI outputs align with organizational logic.

- Business users shift from consumers to collaborators, asking strategic questions, interpreting AI-generated insights, and providing feedback that improves system accuracy.

- AI platforms facilitate these interactions through rigorous metadata management, embedded governance, and continuous learning from user patterns.

The most successful implementations create cross-functional squads that combine technical and business expertise, iterate quickly, and share learnings widely.

Your Strategic Roadmap

Organizations ready to lead this transformation should approach conversational analytics as a systematic capability-building exercise:

1. Launch High-Impact Pilots. Select use cases with clear business value — marketing ROI analysis, regional sales comparisons, or customer behavior tracking. Measure both quantitative improvements (query speed, accuracy) and qualitative feedback from business users.

2. Establish Semantic Foundations. Collaborate across business and analytics teams to codify essential metrics into a central registry. Define "active user," "customer lifetime value," and other key terms with precision. This shared vocabulary becomes the foundation for trust.

3. Implement Governed Self-Service. Create analyst review checkpoints for complex queries while enabling autonomous access for routine requests. Every AI response should include transparent lineage — SQL logic, data sources, applied filters — ensuring accountability.

4. Build Learning Loops. Deploy usage tracking, error monitoring, and prompt effectiveness metrics. Use these insights to continuously refine the conversational layer, improving metadata quality and query accuracy over time.

5. Scale Through Skill Development. Equip data engineers with semantic modeling expertise and help analysts develop prompt engineering capabilities. Create small, cross-functional teams that can iterate rapidly and transfer knowledge across the organization.
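Steps 3 and 4 of the roadmap can be sketched together: a routing rule decides whether a generated query needs analyst review, and a small log tracks outcomes per route so error rates can feed back into the system. This is a minimal sketch under stated assumptions. The thresholds, the table names, and the `GeneratedQuery`, `needs_analyst_review`, and `UsageLog` names are all hypothetical, and a real policy would likely consider more signals than join count and table sensitivity.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class GeneratedQuery:
    question: str        # what the user asked
    sql: str             # what the AI produced
    tables: List[str]    # tables the SQL reads from
    join_count: int      # number of joins in the SQL


# Hypothetical policy: sensitive tables or many joins trigger review.
SENSITIVE_TABLES = {"hr.salaries", "finance.payroll"}
MAX_JOINS_WITHOUT_REVIEW = 2


def needs_analyst_review(query: GeneratedQuery) -> bool:
    """Return True when a query should pass an analyst checkpoint."""
    touches_sensitive = any(t in SENSITIVE_TABLES for t in query.tables)
    return touches_sensitive or query.join_count > MAX_JOINS_WITHOUT_REVIEW


class UsageLog:
    """Counts outcomes per route so error rates can be monitored."""

    def __init__(self) -> None:
        self.outcomes: Counter = Counter()

    def record(self, query: GeneratedQuery, succeeded: bool) -> str:
        """Log one query outcome and return the route it was assigned."""
        route = "review" if needs_analyst_review(query) else "self_service"
        self.outcomes[(route, "ok" if succeeded else "error")] += 1
        return route

    def error_rate(self, route: str) -> float:
        """Share of logged queries on this route that ended in an error."""
        ok = self.outcomes[(route, "ok")]
        errors = self.outcomes[(route, "error")]
        total = ok + errors
        return errors / total if total else 0.0
```

A rising `error_rate("self_service")` would be one signal that metric definitions or prompts need attention, or that the review threshold is set too loosely.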

The Competitive Advantage of Conversational Data

The organizations that master conversational analytics won't just have better tools — they'll have fundamentally different decision-making cultures. When any team member can instantly explore data relationships, test hypotheses, and validate insights, the pace of innovation accelerates dramatically.

This isn't only about making data more accessible; it's about making every employee more analytically empowered. The companies that achieve this transformation won't just adapt to the future of data. They'll define it.