How AI Agents Store and Organize Knowledge

Memory architecture is shaping how AI becomes more context-aware, collaborative, and intelligent — from vector databases to knowledge graphs.

Memory in AI is not just storage; it is structure, strategy, and adaptability. As agents become more sophisticated, they must answer three questions: What should I remember? How should I structure it? When should I forget? The design behind these decisions is called memory architecture.

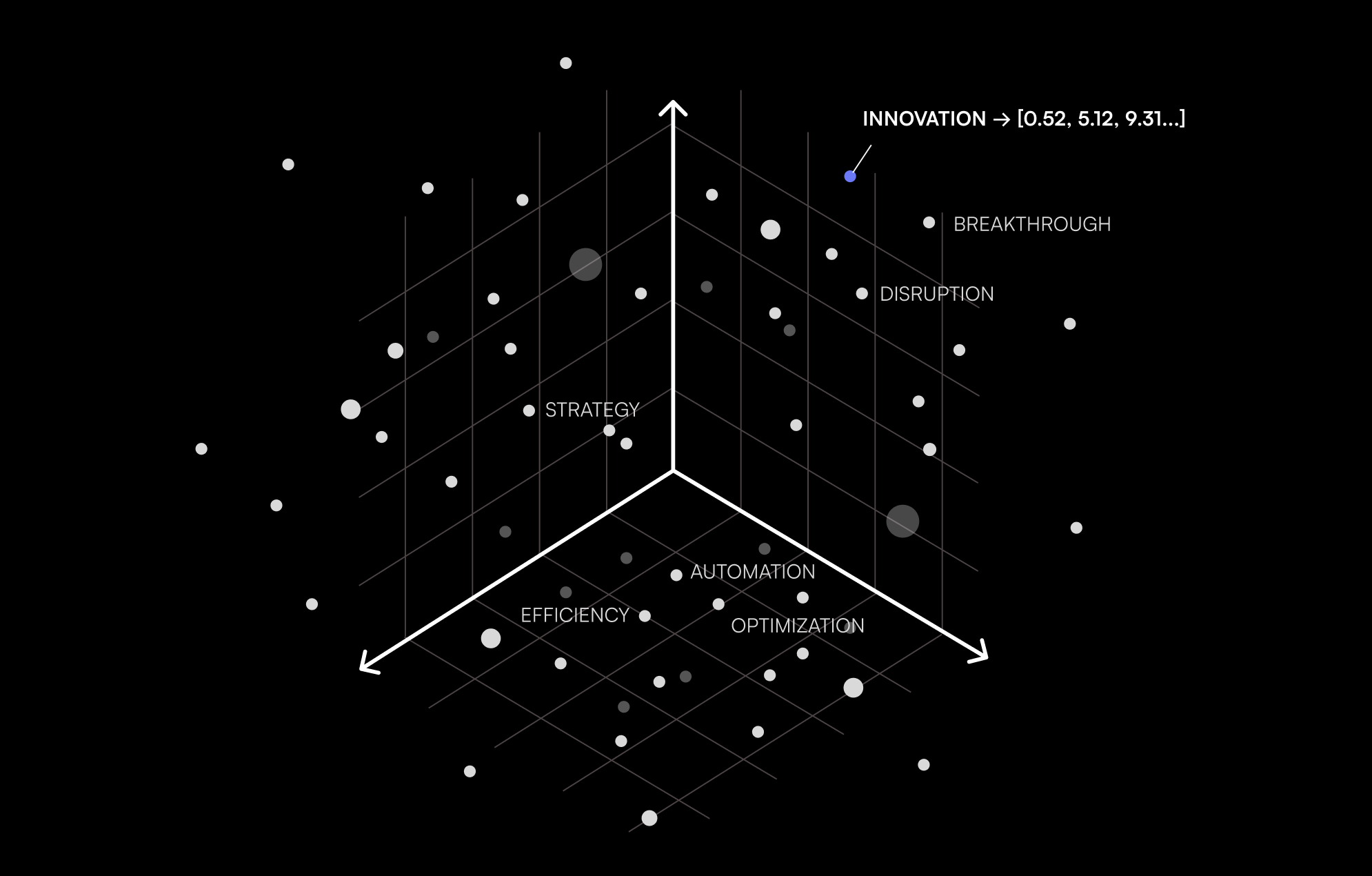

Semantic Recall with Vector Databases

Many AI agents use vector databases to store text as embeddings: coordinates in a high-dimensional space where semantically similar content sits close together. This lets an agent identify related information even when it is phrased differently. Instead of rigid keyword search, the agent retrieves by meaning, which improves the fluidity and relevance of conversations. Retrieval of this kind is commonly added to assistants built on models such as ChatGPT and Claude, helping them stay responsive across interactions by tracking conceptual continuity.
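
To make retrieval by meaning concrete, here is a minimal sketch in plain Python. The three-dimensional vectors and the stored sentences are invented for illustration; a real system would obtain much longer vectors from an embedding model and keep them in a vector database rather than a dictionary.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical memories with hand-written toy embeddings (assumption:
# a real embedding model would produce these vectors).
MEMORY = {
    "The user prefers short weekly summaries": [0.9, 0.1, 0.0],
    "Quarterly revenue grew by eight percent": [0.1, 0.9, 0.2],
    "The office is closed on public holidays": [0.0, 0.2, 0.9],
}

def recall(query_vector, k=1):
    """Return the k stored texts whose vectors are closest to the query."""
    ranked = sorted(
        MEMORY.items(),
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]

# Pretend this is the embedding of "Keep the report brief". It shares no
# keywords with the stored sentence, yet it points in a similar direction.
query = [0.8, 0.2, 0.1]
print(recall(query))
```

The query matches the stored preference about short summaries because the two vectors are close, not because any words overlap.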

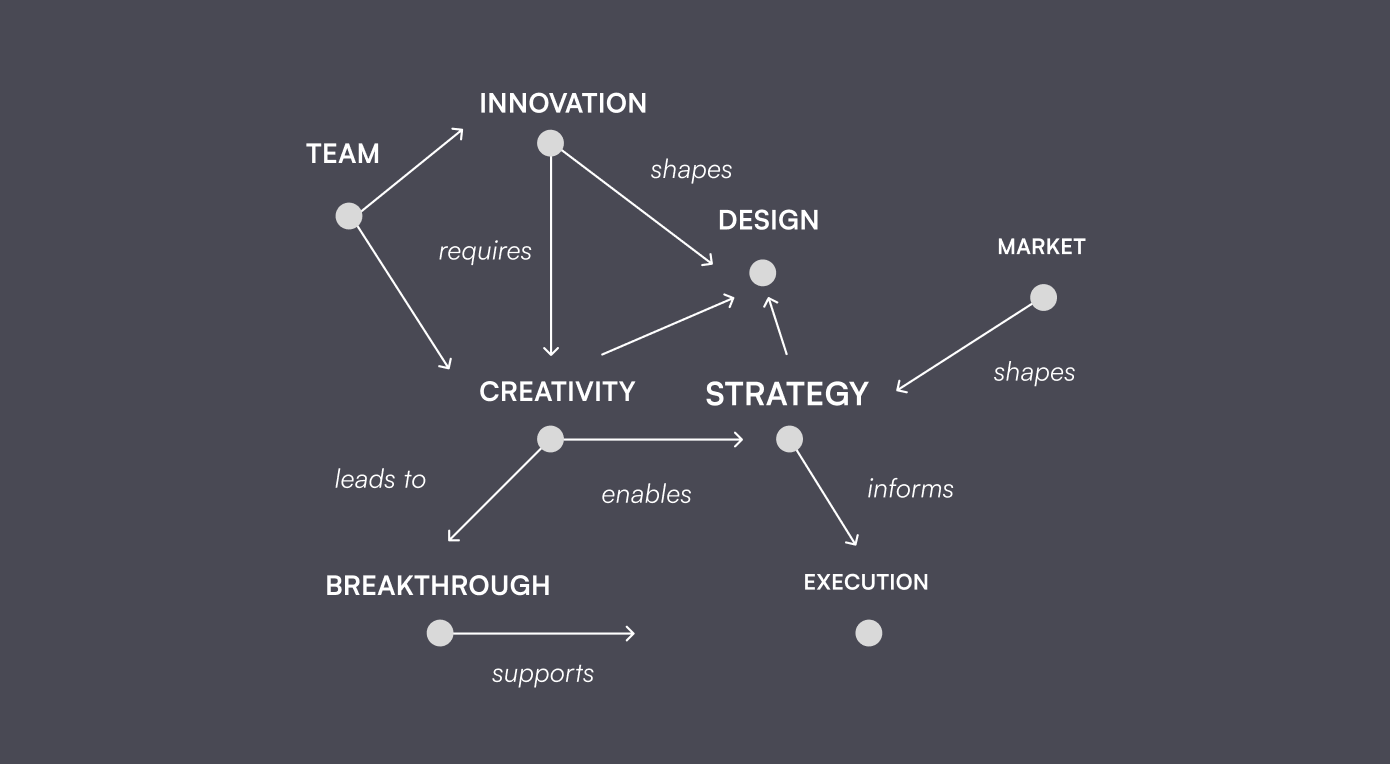

Relational Insight with Knowledge Graphs

Where vector search handles similarity, knowledge graphs handle structure. They define how concepts relate, for example "creativity enables innovation" or "feedback loops improve performance." Because each relationship is stored explicitly, an agent can follow the links to trace dependencies and make multi-step logical inferences. Emerging systems like GraphRAG integrate this graph structure into Retrieval-Augmented Generation, enabling richer, more transparent outputs that reflect real-world relationships.
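
The idea of tracing relationships can be sketched with a tiny in-memory graph of subject, relation, object triples. This is an illustrative toy, not how GraphRAG is implemented; the `KnowledgeGraph` class is hypothetical, and the "innovation drives growth" fact is added only to show a two-step inference.

```python
from collections import defaultdict, deque

class KnowledgeGraph:
    """Toy directed graph: subject -> list of (relation, object)."""

    def __init__(self):
        self.edges = defaultdict(list)

    def add(self, subject, relation, obj):
        self.edges[subject].append((relation, obj))

    def find_path(self, start, goal):
        """Breadth-first search; returns the chain of triples or None."""
        queue = deque([(start, [])])
        visited = {start}
        while queue:
            node, path = queue.popleft()
            if node == goal:
                return path
            for relation, neighbor in self.edges.get(node, []):
                if neighbor not in visited:
                    visited.add(neighbor)
                    queue.append((neighbor, path + [(node, relation, neighbor)]))
        return None

graph = KnowledgeGraph()
graph.add("creativity", "enables", "innovation")
graph.add("feedback loops", "improve", "performance")
graph.add("innovation", "drives", "growth")  # hypothetical extra fact

# How does creativity relate to growth? Follow the explicit links.
for subject, relation, obj in graph.find_path("creativity", "growth"):
    print(subject, relation, obj)
```

Because the path is a list of named relationships, the agent can show why it connected two concepts, which is the transparency benefit described above.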

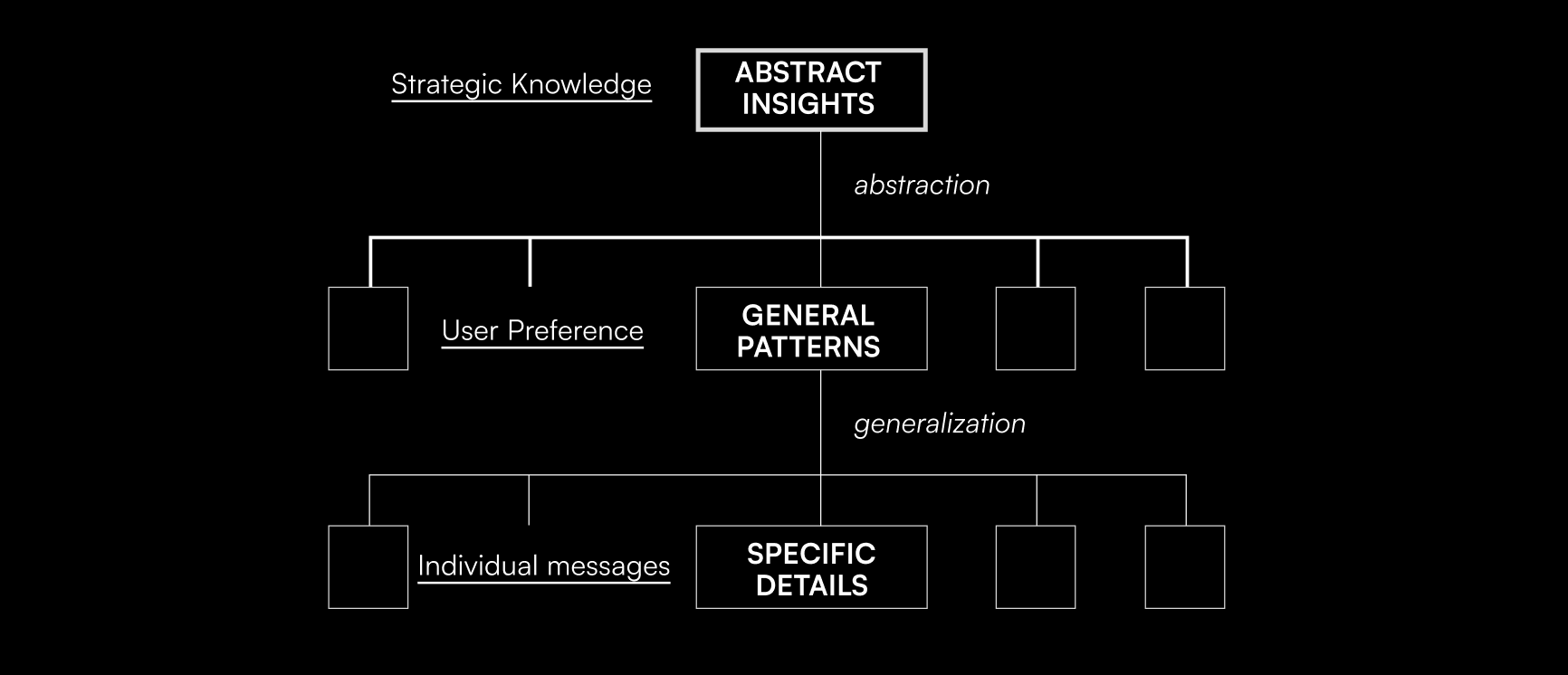

Layered Understanding with Hierarchical Memory

Human cognition works in layers, from short-term memory to long-term understanding, and agent memory is increasingly designed the same way. Hierarchical memory systems allow agents to capture moment-to-moment interactions (e.g., session state) while building up generalized knowledge over time (e.g., trends, themes). Frameworks like LangGraph support this pattern, enabling both short-term reactivity and long-term adaptability in one system.
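
A two-layer memory can be sketched in a few lines of plain Python. This does not use LangGraph's API; the `HierarchicalMemory` class, its method names, and the topic labels are assumptions made for illustration. The short-term layer keeps only the last few messages, while the long-term layer accumulates topic counts as a crude stand-in for "trends and themes."

```python
from collections import Counter, deque

class HierarchicalMemory:
    """Toy memory with a bounded recent buffer and a running theme tally."""

    def __init__(self, short_term_size=3):
        # deque with maxlen drops the oldest entry automatically when full.
        self.short_term = deque(maxlen=short_term_size)
        self.long_term = Counter()

    def observe(self, message, topic):
        """Record a message in session state and update long-term themes."""
        self.short_term.append(message)
        self.long_term[topic] += 1

    def recent_context(self):
        return list(self.short_term)

    def dominant_themes(self, n=1):
        return [topic for topic, _ in self.long_term.most_common(n)]

memory = HierarchicalMemory(short_term_size=3)
memory.observe("Draft the Q1 report", "reporting")
memory.observe("What is left in the travel budget?", "budget")
memory.observe("Shorten the report summary", "reporting")
memory.observe("Send the report to the board", "reporting")

print(memory.recent_context())   # only the three most recent messages
print(memory.dominant_themes())  # the most frequent topic so far
```

The first message has already fallen out of the short-term buffer, but its topic still contributes to the long-term tally, which is the separation of layers the section describes.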

Toward Agents That Evolve with You

When these memory systems work together, AI agents shift from being tools to becoming collaborators. Here's how that evolution plays out:

- Remember preferences and past decisions. With long-term memory, agents can recall explicit preferences, like your preferred communication style or past feedback decisions. This helps avoid repetition and enables personalization. Imagine an assistant that recalls how you structure reports or what you approved last quarter: it's more efficient, and more human.

- Connect related events across time. Using hierarchical and graph-based memory, agents can link conversations across time. Ask for a campaign idea in January, revisit metrics in April, and your agent can trace the storyline, connecting goals, actions, and results into one cohesive thread.

- Anticipate needs and patterns proactively. The most powerful agents won't just respond; they'll prepare. If your pattern shows quarterly board meetings, your agent might proactively generate KPIs before you ask. This predictive layer is what makes AI truly assistive.
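
The quarterly board meeting example can be sketched as a simple cadence check. The meeting dates, the function names, and the seven-day lead time below are all hypothetical; a production agent would likely use richer signals than the median gap between past events.

```python
from datetime import date, timedelta
from statistics import median

def predict_next(event_dates):
    """Estimate the next occurrence from the median gap between past ones."""
    ordered = sorted(event_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    if not gaps:
        return None  # one event or none: no cadence to learn from
    return ordered[-1] + timedelta(days=int(median(gaps)))

def should_prepare(event_dates, today, lead_days=7):
    """True when the predicted next event falls within the lead window."""
    next_date = predict_next(event_dates)
    if next_date is None:
        return False
    return 0 <= (next_date - today).days <= lead_days

# Hypothetical history of board meetings remembered by the agent.
board_meetings = [date(2024, 1, 15), date(2024, 4, 15), date(2024, 7, 15)]

print(predict_next(board_meetings))
print(should_prepare(board_meetings, today=date(2024, 10, 10)))
```

When `should_prepare` returns true, the agent could start drafting the KPI summary without being asked.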

Together, these systems allow AI to learn how you work — not just what you say. Over time, your agent becomes more adaptive, more relevant, and more aligned with your evolving needs. Memory, when architected well, becomes a pathway to intelligence that's not just reactive, but reflective.